I think the best way to answer this question is to look at it from both the humanities and the technical side. The two thinkers who helped me answer it most clearly are Nina Beguš and Maria Antoniak. They come from two worlds that need to be in dialogue. Nina Beguš is a literary scholar trained in Comparative Literature at Harvard whose work asks what happens when questions about authorship, language, agency, and even “the human” are increasingly being shaped inside technical fields. Maria Antoniak is a computer scientist at the University of Colorado Boulder whose research in natural language processing, cultural analytics, and interpretation looks directly at the relationship between language technology and culture. So this is not just an arts argument reacting to technology from the outside. It is a serious interdisciplinary argument coming from both the humanities and computational fields.

What I take from Beguš is that Fine Arts and the humanities should not leave this conversation to engineers, platforms, and product teams alone. Her core argument is that AI is not only a technical system. It is also a cultural and philosophical one. It changes how we think about meaning, authorship, representation, and human agency. She argues that if those questions are framed only inside STEM, then we lose the depth of analysis that the humanities bring. That is why she proposes “artificial humanities” as a framework: not to comment on technology after the fact, but to stay in the room while it is being shaped.

Antoniak and her co-authors argue that generative AI has been shaped by disciplinary inputs that are still too narrow, and that humanities research remains underrepresented in how these systems are developed and discussed. Their line, “models make words, but people make meaning,” gets to the heart of it. A model can generate output, but it does not assume responsibility for meaning, context, interpretation, authorship, or culture.

People do. Artists do. That is exactly why artists should not be pushed out of this discussion.

So my answer would be that Concordia can justify offering a course like this precisely because Fine Arts students need a place where these tools can be examined critically, rigorously, and with artistic judgment still at the center. If artists and humanists leave the room, then decisions about creativity, culture, and authorship are left mostly to technical optimization and commercial platforms.

I do not think that protects artists. I think it weakens their position.

A Fine Arts course is one of the right places to ask the harder questions: what kind of work is being produced, what values are being encoded, what is ethically acceptable, and where human authorship remains non-negotiable. That, to me, is the strongest justification.

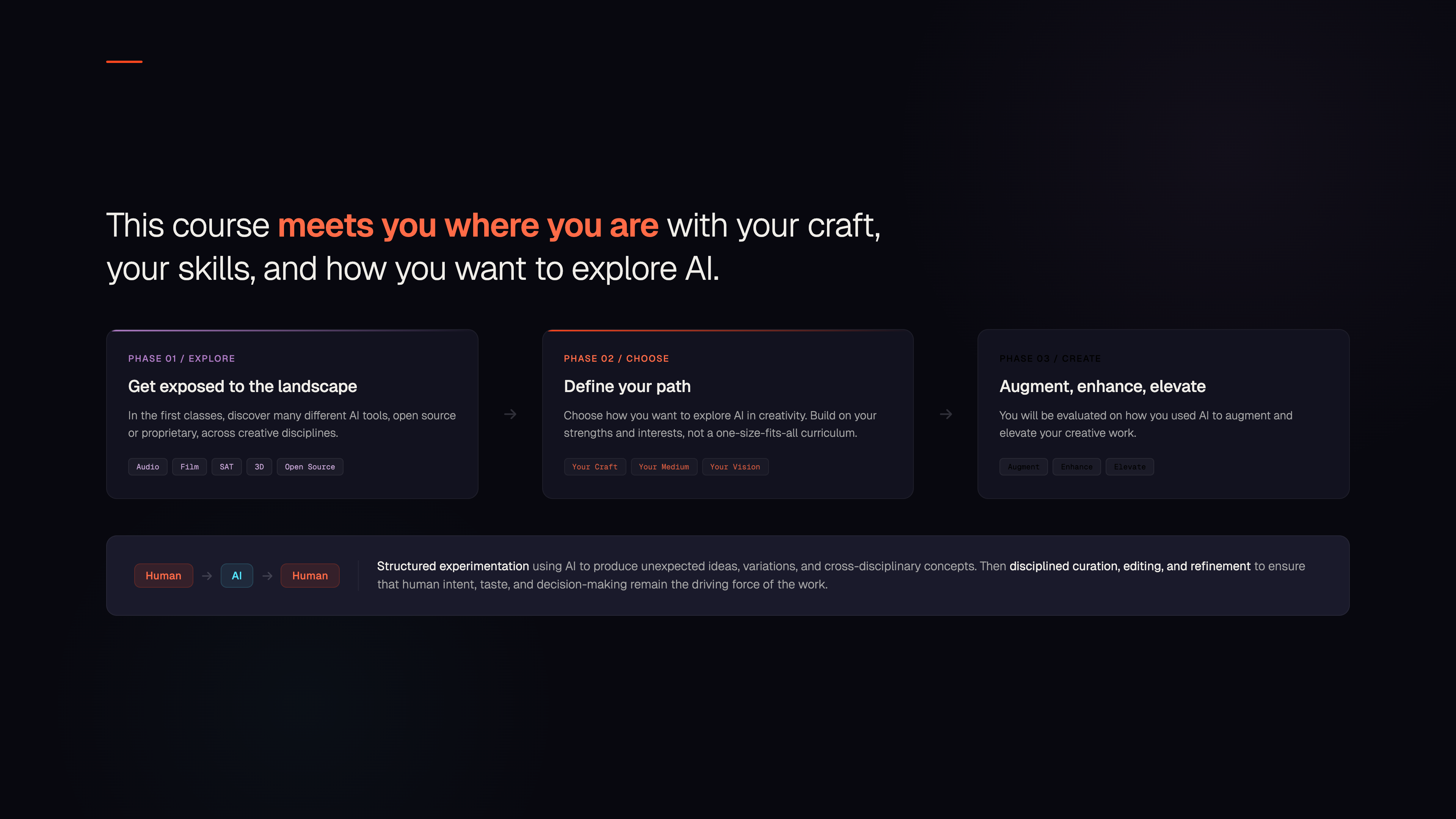

I think it is important to be very clear about what this course is not. It is not a course on prompting for its own sake. It is not a class on how to make better Midjourney images. If that were the goal, it would reduce art education to surface-level output and completely miss what is actually at stake for artists right now.

What interests me is almost the opposite.

Creativity is still the engine of differentiation: in culture, in products, in brands, in science, in careers.

Generative AI collapses the cost of making drafts: words, images, audio, code, prototypes. But when “making” becomes cheap, meaning becomes scarce. And when output becomes infinite, taste becomes the bottleneck. The question that matters is: does this have creative value?

And who can answer this question? It is not simply “people who use AI tools.” It will be the people and institutions who can:

- reliably “mine” the AI black box (latent space) for surprising, high-value directions,

- translate those directions into shared meaning so collaborators and audiences can align, and

- turn the best results into systems that compound instead of resetting every project.

This course is about new frameworks for thinking, new methods of critique, new ways of working, and a much higher standard for selection and authorship.

Now, how will this course ensure that the promotion of generative AI as a tool for artists remains “ethical”?

Part of the answer is that ethics has to become a practical method. Students must be taught that AI is not a neutral tool, but a system shaped by human data, historical bias, and embedded assumptions. That means learning to ask concrete questions before using any tool: What role is this system actually playing in the process? What was it trained on, and where did that data come from? What kinds of biases or flattening effects might it produce? And how much trust should be placed in its output without human oversight?

One method I am currently evaluating is a framework such as SIFT, although its precise role in the course remains to be determined. SIFT stands for Specify, Identify, Focus, and Trust. It offers a practical way of asking students to clarify the role AI is playing in a creative process, identify where the system and its training data come from, focus on the limits and possible biases of its outputs, and decide what level of trust is appropriate while keeping human oversight in place.

Our partnership with the Société des arts technologiques (SAT) helps make that ethical framework concrete. The SAT’s technology vision is built around open and interoperable systems, user-centered design, and a commitment to durability and social impact. Its tool ecosystem is designed to let artists connect systems, shape their own workflows, and remain focused on creation rather than disappear inside opaque platforms. That is important ethically, because it shifts the conversation from “using AI” in the abstract to building accountable, critically informed creative processes.

The SAT’s own technologies illustrate that orientation. LivePose, for example, is a movement-detection tool that generates network events artists can use in other creative environments via OSC or WebSocket. Scenic supports telepresence and hybrid performance. Ossia is a free, open-source tool for orchestration and interactive scenarization. Puara supports modular interactivity across connected devices. These are not examples of handing authorship over to a machine. They are examples of artists using computational systems as components inside larger human-led creative setups.

This course is built around giving artists the critical and technical agency to decide how, when, and whether these systems belong in their work.

Now, let me be practical. I’m a proud Concordia Film Production graduate, and what stayed with me from that education was never just the tools. In fact, almost every tool I learned at school has been radically transformed by new technologies over the years. What made Concordia so valuable was not that it trained me on a specific technical moment, but that it taught me ways of thinking, working, questioning, and creating that remained useful long after the tools themselves changed.

Many students leaving university will enter an industry where AI is increasingly built into the professional tools they are expected to use.

Let’s take editing. Adobe now describes Quick Cut as an AI-assisted feature that helps assemble a structured first cut from uploaded media. That matters because it shows AI is no longer a side conversation or a fringe tool. It is being integrated directly into mainstream industry software.

But at the same time, working editors are already pointing out that these are first-draft editing tools: they may help with rough assembly, clipping, captions, organization, and repetitive labor, but they do not replace the editor’s real value.

They do not replace emotional timing, rhythm, narrative judgment, or the instinct to hold on a face a fraction longer because that is where the meaning is.

An MIT Technology Review article, “How AI can help supercharge creativity,” sharpens that point by arguing that the most interesting uses of AI are not about one-click replacement, but about preserving friction, surprise, challenge, and human direction inside the process.

So for students who may go on to work in film, editing, or post, the question is not whether they can avoid these systems entirely. They will encounter them.

The real question is whether they are being prepared to use them critically, knowing what these tools accelerate, what they flatten, and where human craft still has to lead.

So yes, Concordia should provide a course like this. Not because generative AI is ethically resolved, but because it is not.

I’ll leave you with a quote from an article published in Science:

> If we want to live in a world in which there is room for future Dalís and not just new iterations of DALL-E, we need less moral panic about “creative AI” and more sensible policy work around creative education, art institutions, and funding models that ensure such practices are not governed only by the logic of the financial markets or the optimization efforts of Big Tech.

That is why this course belongs in a Fine Arts faculty, not in a computer science department.

Thank you.